What is an AI agent?

An AI agent is a software system that observes a situation, reasons about what should happen next, and takes action toward a goal. In a business setting, that action may be as simple as routing a ticket or as complex as gathering context from a CRM, drafting a response, checking policy, asking for approval, and updating a workflow tool.

The important difference between an AI agent and a single prompt is continuity. A prompt produces one response. An agent operates inside a loop: perceive, decide, act, evaluate, and adjust the next step. Depending on the agent type, that loop may follow fixed rules, track an internal model of the world, plan toward a goal, weigh trade-offs, or learn from feedback.
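To make the loop concrete, here is a minimal sketch in Python. The function `run_agent_loop`, its callback parameters, and the toy ticket queue are illustrative assumptions, not part of any particular framework.

```python
from typing import Callable


def run_agent_loop(
    perceive: Callable[[], int],
    decide: Callable[[int], str],
    act: Callable[[str], None],
    goal_reached: Callable[[int], bool],
    max_steps: int = 10,
) -> list[str]:
    """Repeat perceive -> evaluate -> decide -> act until the goal check passes."""
    actions_taken = []
    for _ in range(max_steps):          # hard cap so the loop always terminates
        observation = perceive()
        if goal_reached(observation):   # evaluate the result of the last step
            break
        action = decide(observation)
        act(action)
        actions_taken.append(action)
    return actions_taken


# Toy environment (hypothetical): a backlog of unassigned tickets.
queue = {"unassigned": 3}

history = run_agent_loop(
    perceive=lambda: queue["unassigned"],
    decide=lambda backlog: "assign_ticket",
    act=lambda action: queue.update(unassigned=queue["unassigned"] - 1),
    goal_reached=lambda backlog: backlog == 0,
)
```

A single prompt would correspond to one call of `decide`. The agent is the surrounding loop, including the step cap that stops it from running forever.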

Quick answer: the main types of AI agents

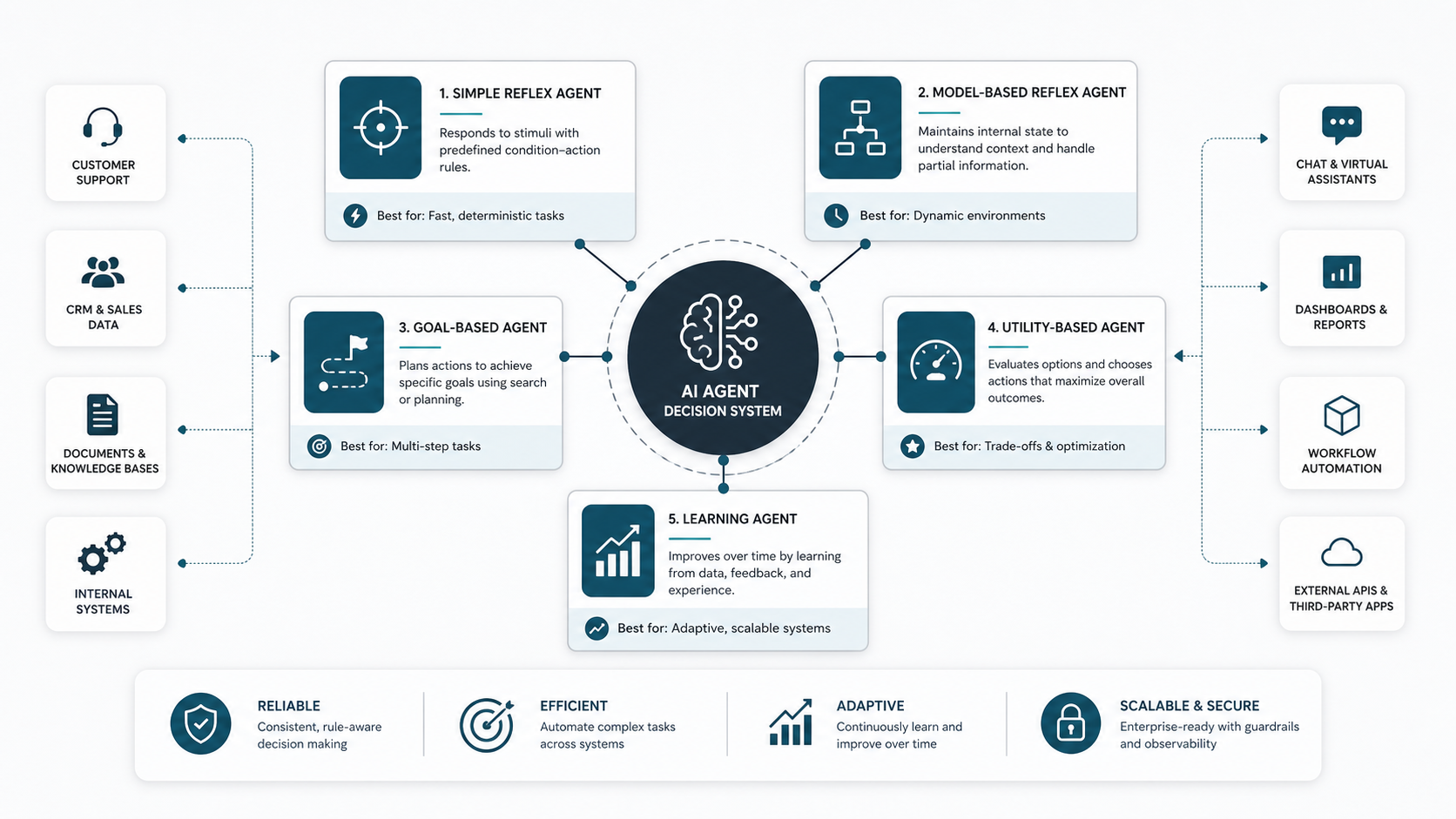

Most practical taxonomies start with five core AI agent types: simple reflex agents, model-based reflex agents, goal-based agents, utility-based agents, and learning agents. Modern implementations often add multi-agent systems and hierarchical agents when work needs coordination across roles, tools, or decision layers.

- Simple reflex agents react to the current input using fixed condition-action rules.

- Model-based reflex agents maintain an internal model of the world so they can act with context.

- Goal-based agents compare possible actions against a defined outcome.

- Utility-based agents score options and choose the action with the best expected value.

- Learning agents improve behavior through feedback, data, evaluation, or retraining.

- Multi-agent systems split work across multiple specialized agents.

- Hierarchical agents use manager-worker layers to plan, delegate, and execute complex work.

AI agent types comparison table

| Agent type | Best fit | Memory or context need | Example business use | Main limitation |
|---|---|---|---|---|
| Simple reflex | Clear, repetitive decisions | Low | Route a support ticket by keyword or form field | Fails when context changes or inputs are ambiguous |
| Model-based reflex | Decisions that need recent state | Medium | Prioritize follow-ups based on account history and open tasks | Depends on the quality of the maintained model |
| Goal-based | Tasks with a specific target outcome | Medium to high | Plan the steps needed to qualify a lead or resolve a request | May choose inefficient paths without scoring trade-offs |
| Utility-based | Trade-off-heavy decisions | High | Choose the best retention offer based on cost, risk, and value | Requires well-designed scoring criteria |
| Learning | Workflows that improve from feedback | High | Improve document classification from reviewer corrections | Needs data, evaluation, and governance |
| Multi-agent | Large workflows with specialized roles | High | Research, draft, review, and publish a knowledge-base article | Coordination and observability become harder |
| Hierarchical | Complex planning and delegation | High | Break a customer onboarding project into tasks for multiple systems | Requires strong orchestration and clear escalation rules |

Simple reflex agents

A simple reflex agent maps the current input directly to an action. It does not remember past events or reason about long-term outcomes. This makes it fast, predictable, and easy to test.

Use this pattern when the environment is stable and the rule is obvious: if the invoice amount is missing, ask for the invoice again; if the ticket contains a known outage code, route it to the infrastructure queue; if a form is incomplete, return it for correction.

The risk is brittleness. A simple reflex agent cannot handle nuance unless every relevant condition has already been encoded. For many companies, this is still a useful first step because it automates low-risk decisions before an LLM or tool-using agent is introduced.
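The condition-action pattern can be sketched in a few lines of Python. The rule list, the outage code `OUT-503`, and the action names below are hypothetical placeholders chosen to mirror the examples in this section.

```python
from typing import Callable

# Each rule pairs a condition on the current input with an action name.
Rule = tuple[Callable[[dict], bool], str]

RULES: list[Rule] = [
    (lambda item: item.get("type") == "invoice" and item.get("amount") is None,
     "request_invoice_again"),
    (lambda item: item.get("type") == "ticket" and "OUT-503" in item.get("text", ""),
     "route_to_infrastructure_queue"),
    (lambda item: item.get("type") == "form" and not item.get("complete", False),
     "return_for_correction"),
]


def simple_reflex_agent(item: dict) -> str:
    """Return the action of the first matching rule; keep no memory between calls."""
    for condition, action in RULES:
        if condition(item):
            return action
    # Nothing matched: this is the brittleness the pattern is known for.
    return "escalate_to_human"
```

Because the agent is a pure function of its input, every rule can be covered by an ordinary unit test, and anything the rules do not anticipate falls through to a human.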

Model-based reflex agents

A model-based reflex agent keeps an internal representation of the current situation. That model might include user preferences, workflow state, prior messages, open tickets, account status, inventory levels, or policy constraints.

This type is helpful when the next action depends on what happened before. A customer success agent, for example, may need to know that a renewal call is already scheduled before sending another reminder. A procurement assistant may need the latest approval status before escalating a purchase request.

The core implementation challenge is state quality. If the internal model is stale or incomplete, the agent may make confident but wrong decisions. Good integrations, data freshness checks, and fallback behavior matter more than clever prompts.
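As a rough illustration of the renewal-reminder example, the sketch below keeps a small per-account model and refuses to act on stale state. The class names, event names, and the 24-hour freshness threshold are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class AccountModel:
    """Internal state the agent maintains between events (hypothetical fields)."""
    renewal_call_scheduled: bool = False
    last_synced_hours_ago: float = 0.0


@dataclass
class ReminderAgent:
    max_staleness_hours: float = 24.0          # assumed freshness threshold
    accounts: dict = field(default_factory=dict)

    def observe(self, account_id: str, event: str) -> None:
        """Update the internal model from an incoming event."""
        model = self.accounts.setdefault(account_id, AccountModel())
        if event == "renewal_call_scheduled":
            model.renewal_call_scheduled = True
        elif event == "renewal_call_cancelled":
            model.renewal_call_scheduled = False
        model.last_synced_hours_ago = 0.0

    def next_action(self, account_id: str) -> str:
        """Decide from the model, falling back when state is missing or stale."""
        model = self.accounts.get(account_id)
        if model is None or model.last_synced_hours_ago > self.max_staleness_hours:
            return "refresh_state_first"
        if model.renewal_call_scheduled:
            return "skip_reminder"
        return "send_reminder"
```

The `refresh_state_first` branch is the fallback behavior: when the model is missing or too old, the agent asks for fresh data instead of acting on a guess.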

Goal-based agents

A goal-based agent evaluates actions by asking whether they move the system closer to a target. The target can be simple, such as closing a support ticket, or more involved, such as preparing a sales proposal with required pricing, approvals, and technical assumptions.

Goal-based agents are useful when the path is not fixed. They can plan steps, call tools, inspect results, and adjust. In agentic AI applications, this is often where LLMs become valuable because they can reason over context and decide which tool or workflow step should happen next.

The limitation is that not every path to a goal is equally good. Without cost, risk, time, or quality criteria, a goal-based agent may reach the destination inefficiently. For business workflows, that often means adding utility scoring or human review.
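One way to picture goal-based behavior without an LLM is a small planner that searches for a sequence of actions reaching the goal. The sketch below uses breadth-first search over sets of facts. The action names, their preconditions, and the goal are invented for the sales proposal example, and a real agent would likely combine this kind of planning with model-driven tool selection.

```python
from collections import deque

# Hypothetical actions: name -> (facts required, facts produced).
ACTIONS = {
    "gather_requirements": (frozenset(), frozenset({"requirements"})),
    "draft_technical_assumptions": (frozenset({"requirements"}), frozenset({"assumptions"})),
    "calculate_pricing": (frozenset({"requirements"}), frozenset({"pricing"})),
    "request_approval": (frozenset({"pricing", "assumptions"}), frozenset({"approval"})),
}


def plan(start: frozenset, goal: frozenset):
    """Breadth-first search for a shortest action sequence that satisfies the goal.

    Returns a list of action names, or None if the goal cannot be reached.
    """
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, steps = frontier.popleft()
        if goal <= state:                      # every goal fact is present
            return steps
        for name, (needs, adds) in ACTIONS.items():
            if needs <= state:                 # preconditions are met
                new_state = state | adds
                if new_state not in seen:
                    seen.add(new_state)
                    frontier.append((new_state, steps + [name]))
    return None


proposal_goal = frozenset({"pricing", "assumptions", "approval"})
proposal_plan = plan(frozenset(), proposal_goal)
```

Breadth-first search returns a plan with the fewest steps, but it treats every step as equally expensive. That is exactly the gap described above: nothing in this planner accounts for cost, risk, or time.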

Utility-based agents

A utility-based agent scores each possible action with a utility function and chooses the one with the highest expected utility. It does not only ask, "Will this achieve the goal?" It asks, "Which option produces the best outcome under the constraints?"

This matters when trade-offs are real. A finance agent may balance fraud risk, customer value, and approval time. A logistics agent may balance delivery cost, SLA risk, and stock availability. A sales agent may choose between speed, personalization, and compliance.

The hard part is defining utility honestly. The scoring model needs business input, risk thresholds, and monitoring. If the utility function is poorly designed, the agent may optimize the wrong thing beautifully.
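A minimal sketch of utility scoring for the retention-offer case follows. The offers, their costs, acceptance probabilities, and the utility formula itself are made-up assumptions. In practice the scoring model would come from business input and be monitored over time.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Offer:
    name: str
    cost: float              # what the offer costs if the customer accepts
    retained_value: float    # revenue kept if the customer stays
    p_accept: float          # estimated probability of acceptance


def expected_utility(offer: Offer) -> float:
    """Expected net value, assuming the cost is only paid on acceptance."""
    return offer.p_accept * (offer.retained_value - offer.cost)


def choose_offer(offers: list[Offer], max_cost: float) -> Optional[Offer]:
    """Pick the highest-utility offer among those that respect the cost constraint."""
    affordable = [offer for offer in offers if offer.cost <= max_cost]
    return max(affordable, key=expected_utility, default=None)


# Illustrative numbers only.
OFFERS = [
    Offer("10% discount", cost=120, retained_value=1200, p_accept=0.5),
    Offer("free month", cost=100, retained_value=1200, p_accept=0.4),
    Offer("premium upgrade", cost=400, retained_value=1200, p_accept=0.7),
]
```

With these numbers, a cost ceiling of 500 selects the premium upgrade, while a ceiling of 300 rules it out and the discount wins. Changing the formula or the constraint changes the decision, which is why the utility definition deserves as much review as the code.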

Learning agents

A learning agent improves from experience. In practice, learning may come from human feedback, labeled examples, evaluation results, retrieval improvements, prompt changes, model fine-tuning, or policy updates. The agent does not need to retrain itself automatically to be useful; it needs a measurable improvement loop.

Learning agents are strong candidates for document classification, recommendation, triage, personalization, anomaly detection, and workflows where reviewer corrections are available. They are also higher-responsibility systems because errors can compound if feedback is noisy or incentives are wrong.

For a production business system, treat learning as an operating model. Define what feedback is captured, who approves changes, how regressions are detected, and how the agent is rolled back if performance drops.
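The sketch below shows one possible shape for such an improvement loop: reviewer corrections train a candidate version, and the candidate is promoted only if it does not regress on a held-out evaluation set. The toy keyword classifier and the function names are assumptions chosen to keep the example self-contained. A production system would use a real model and a larger evaluation set.

```python
import copy
from collections import Counter, defaultdict


class KeywordClassifier:
    """Toy document classifier: each word votes for the labels it was seen with."""

    def __init__(self):
        self.counts = defaultdict(Counter)     # word -> label counts

    def learn(self, text: str, label: str) -> None:
        for word in text.lower().split():
            self.counts[word][label] += 1

    def predict(self, text: str, default: str = "unknown") -> str:
        votes = Counter()
        for word in text.lower().split():
            votes.update(self.counts.get(word, {}))
        return votes.most_common(1)[0][0] if votes else default


def accuracy(model: KeywordClassifier, eval_set: list) -> float:
    correct = sum(model.predict(text) == label for text, label in eval_set)
    return correct / len(eval_set)


def promote_if_better(current: KeywordClassifier, corrections: list, eval_set: list):
    """Train a candidate on reviewer corrections; keep the current version on regression."""
    candidate = copy.deepcopy(current)
    for text, label in corrections:
        candidate.learn(text, label)
    if accuracy(candidate, eval_set) >= accuracy(current, eval_set):
        return candidate
    return current                             # rollback: the candidate is discarded
```

The evaluation gate is what turns feedback into a controlled process: noisy corrections that would lower accuracy never reach production, because the previous version stays in place.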

Multi-agent and hierarchical agents

Multi-agent systems divide work between specialized agents. One agent may research, another may draft, a third may validate policy, and a fourth may update the destination system. Hierarchical agents add a manager layer that plans work, delegates subtasks, and checks completion.

This pattern is useful for long workflows that touch multiple systems or require different skills. It can also make governance easier when each agent has a narrow role and scoped permissions.

The trade-off is coordination complexity. Multi-agent systems need clear ownership, shared state, observability, retry behavior, and escalation rules. Without those controls, the architecture can become slower and harder to debug than a simpler agent.
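A stripped-down version of the manager-worker pattern might look like the sketch below. The three worker functions stand in for specialized agents, and the shared `state` dictionary, the log format, and the escalation status are illustrative assumptions rather than a recommended design.

```python
def research(state: dict) -> dict:
    return {**state, "notes": f"facts about {state['topic']}"}


def draft(state: dict) -> dict:
    return {**state, "draft": f"Article: {state['notes']}"}


def validate_policy(state: dict) -> dict:
    # Placeholder policy check for the example.
    if "confidential" in state["draft"].lower():
        raise ValueError("draft mentions confidential material")
    return {**state, "approved": True}


# Each worker has one narrow role; the manager owns ordering and escalation.
WORKERS = [("research", research), ("draft", draft), ("validate_policy", validate_policy)]


def manager(topic: str) -> dict:
    """Delegate to workers in order, log each step, and escalate on the first failure."""
    state = {"topic": topic, "log": []}
    for name, worker in WORKERS:
        try:
            state = worker(state)
            state["log"].append(f"{name}: ok")
        except Exception as error:
            state["log"].append(f"{name}: failed ({error})")
            state["status"] = "escalated_to_human"
            return state
    state["status"] = "done"
    return state
```

Even in this small form, the controls listed above show up as code: shared state is passed explicitly, the log gives basic observability, and a failed step ends in an escalation instead of a silent retry.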

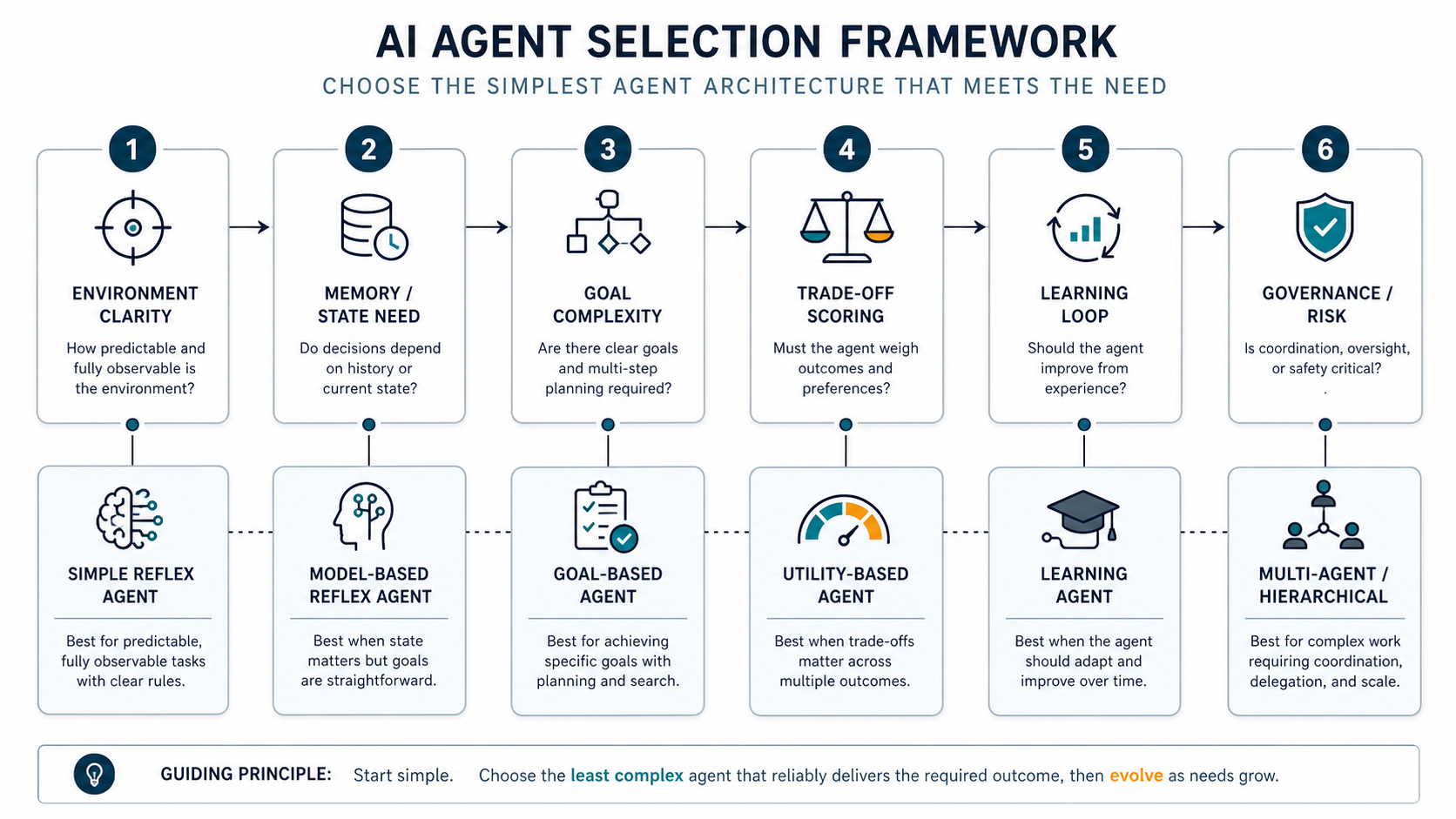

AI agent selection framework

Start with the workflow, not the technology label:

- If the environment is predictable and the decision can be expressed as a rule, use a simple reflex agent.

- If the agent needs state, use a model-based pattern.

- If it must plan toward an outcome, use a goal-based agent.

- If multiple acceptable actions exist and the best one depends on cost, risk, speed, or customer value, add utility scoring.

- If the workflow should improve over time, design a learning loop.

- If the work requires multiple roles or tools, consider a multi-agent or hierarchical architecture.

A good selection question is: what would make this agent fail in production? If the answer is missing context, prioritize memory and integrations. If the answer is unclear priorities, define utility. If the answer is unsafe autonomy, add approvals and observability before adding more capability.
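The selection rules in this section can be written down as a small checklist function. The `Workflow` fields and the returned labels below are hypothetical names for the questions above, and the result is meant as a starting point for discussion, not an automated architecture decision.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    """Answers to the selection questions (field names are illustrative)."""
    rule_expressible: bool = False
    needs_state: bool = False
    needs_planning: bool = False
    has_trade_offs: bool = False
    improves_over_time: bool = False
    multiple_roles_or_tools: bool = False


def recommend_patterns(workflow: Workflow) -> list[str]:
    """Return the agent patterns suggested by the workflow's characteristics."""
    checks = [
        (workflow.rule_expressible, "simple reflex"),
        (workflow.needs_state, "model-based reflex"),
        (workflow.needs_planning, "goal-based"),
        (workflow.has_trade_offs, "utility scoring"),
        (workflow.improves_over_time, "learning loop"),
        (workflow.multiple_roles_or_tools, "multi-agent or hierarchical"),
    ]
    return [pattern for applies, pattern in checks if applies]
```

The function returns a list because the patterns stack: an account-aware follow-up workflow with competing priorities would come back as both model-based reflex and utility scoring.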

Business examples by function

AI agents become valuable when they are attached to real systems of work. In customer support, agents can triage tickets, retrieve policy, draft responses, and escalate edge cases. In sales, they can enrich leads, prepare account briefs, update CRM records, and schedule follow-ups. In finance, they can classify invoices, flag exceptions, and route approvals. In operations, they can monitor queues, detect anomalies, and coordinate handoffs.

The same taxonomy applies across these functions. A simple reflex rule may be enough for a routing step. A model-based agent may be needed for account-aware follow-up. A utility-based agent may be appropriate when several actions are valid but one creates a better business outcome. A learning agent may fit when reviewers already correct decisions and those corrections can improve the next version.

Governance checklist before building

Before building an AI agent, define its boundary. What systems can it read? What systems can it write to? Which actions require human approval? What data is sensitive? What logs are needed for audit? What happens when a tool fails? What should the agent do when confidence is low?

For most business agents, production readiness depends on scoped permissions, test datasets, evaluation criteria, prompt and policy versioning, fallback behavior, monitoring, and a clear owner. These controls are not paperwork. They are what make an agent dependable enough to use outside a demo.
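Several of these boundary questions can be encoded as an explicit policy that every tool call passes through. The sketch below is one possible shape. The `AgentBoundary` fields, the system names, the 0.8 confidence threshold, and the decision labels are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentBoundary:
    """Scoped permissions for one agent (hypothetical structure)."""
    readable: frozenset
    writable: frozenset
    needs_approval: frozenset          # writable systems that require human sign-off
    min_confidence: float = 0.8        # assumed threshold for autonomous writes


def authorize(boundary: AgentBoundary, action: str, system: str, confidence: float) -> str:
    """Decide whether a proposed tool call may proceed."""
    if action == "read":
        return "allow" if system in boundary.readable else "deny"
    if action == "write":
        if system not in boundary.writable:
            return "deny"
        if confidence < boundary.min_confidence:
            return "hand_off_to_human"
        if system in boundary.needs_approval:
            return "require_approval"
        return "allow"
    return "deny"                      # unknown action types are rejected


support_agent = AgentBoundary(
    readable=frozenset({"crm", "knowledge_base"}),
    writable=frozenset({"ticketing", "crm"}),
    needs_approval=frozenset({"crm"}),
)
```

Keeping the policy in one versioned object makes it reviewable and testable, and each decision it returns is a natural thing to write to the audit log.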

How NextPage approaches AI agent development

NextPage treats AI agents as software systems, not isolated prompts. A practical build usually starts with workflow discovery, then moves through data and integration mapping, risk review, prototype design, evaluation, human-in-the-loop controls, deployment, and ongoing improvement.

The best first agent is often narrow: one workflow, clear success criteria, limited permissions, and measurable outcomes. After that system is stable, it can be expanded with more tools, richer memory, or additional specialized agents.

If you are comparing agent architectures for a product, internal workflow, or customer-facing automation, NextPage can help turn the taxonomy into an implementation plan: what to automate first, what to keep human-reviewed, what data needs cleanup, and what architecture gives you the safest path to value.